Various reports and studies attempt to capture the degree of AI development and adoption within the business sector.

The most comprehensive reports released so far include State of Polish AI 2021 and Map of Polish AI 2019. These reports offer an extensive list of Polish companies, even those with small AI teams. Unfortunately, much has changed over the past few years, and it’s a pity that these reports have not been updated.

Noteworthy is the list of Polish AI startups compiled by Dr. Przemysław Chojecki in mid-2023, which includes over 100 startups, as well as service companies (software houses) specializing in AI. A similar list of Polish startups was created by Jan Szumada.

A much newer report is the EY report How Polish Companies are Implementing AI?, for which EY surveyed a large cross-section of companies (over 500) of varying sizes and from different sectors. The main conclusion: 20% of large and medium-sized enterprises in Poland have implemented AI-based systems, and 80% of companies that have completed an AI implementation claim to have achieved benefits. Unfortunately, these results raise considerable doubts because the topic is not explored in depth: what exactly do we mean by “AI implementation”?

Medical startups (170 from Poland) are thoroughly analyzed in the report Top Disruptors in Healthcare. This report is prepared by the AI in Health Coalition, which includes companies (such as COGITA) and other entities whose goal is to shape the application of artificial intelligence in the healthcare industry in Poland, benefiting patients and doctors alike.

A platform initiated by the ministry, ai4msp.pl, showcases many AI project implementations by Polish companies. It is intended to serve as a marketplace, although it is difficult to assess its current market significance.

Podcasts and interviews, such as Nieliniowy or 99twarzyAI, provide a fairly good overview of what is actually happening with artificial intelligence in Polish companies.

It’s worth noting that in the era of generative AI, companies are increasingly focusing on training their own staff (IT departments or others) and testing AI solutions internally rather than outsourcing work to qualified Data Scientists, who until recently had a monopoly on creating machine learning and artificial intelligence systems.

Within the Polish software companies’ association SoDA, a working group on artificial intelligence—SoDA AI Research Group—was established last year, consisting of about 100 members from 30 Polish AI companies.

Two reports presented by this group are noteworthy. Human-AI Collaboration: Perspectives for the Polish Public Sector provides examples of AI projects completed in various areas of the public sector and the challenges associated with them. Meanwhile, the report Generative AI in Business (co-created by more entities than just SoDA) focuses strictly on generative artificial intelligence and presents its potential in various industries. This report is more focused on showcasing possibilities and tools rather than presenting actual AI projects implemented.

In recent months, the Chamber AI trade chamber was also established, which currently lists 44 companies dealing with AI solutions.

The last year and a half has also seen a huge increase in interest in artificial intelligence from non-technical people, programmers who have so far been dealing with other technologies, and individuals developing their own businesses.

Various groups have created noteworthy training, communities, and platforms, such as Elephant AI, AI_Devs, Campus AI, or Building AI Products. These courses vary greatly in level and outcomes, often leading individuals to join several different trainings. Some courses have thousands of participants.

In terms of industry events, long-standing cyclical, more technical initiatives continue to grow, such as Data Science Summit, ML in PL, PyData, or warsaw.ai. However, more local initiatives for AI enthusiasts are also emerging, such as AI Breakfasts, Masovian AI Fest, or GenAI Cracow.

Also worth mentioning in this category is the SpeakLeash community, which brings together over 1,000 enthusiasts around the idea of building a large corpus of Polish-language data that is then used to train Polish LLMs.

A great analysis of what is happening in Polish science in terms of AI is presented in the IDEAS NCBR Report “Retaining the Best – Trends in PhD Education in the Area of Artificial Intelligence”. It shows that in the last 3.5 years, only 200 PhDs in AI have been defended in Poland. This is a small number given the rapid development of this field.

It’s worth noting that in an era of rapid change driven by AI, authorities in this field are often individuals educated in other areas (philosophy, management) or celebrities. Such individuals gain a large audience with their loud marketing messages, offering their appearances at conferences, consultations, or training.

An example of a list that tried to select the most influential people in AI is the article “23 Most Influential Poles in AI” prepared by MyCompany Polska.

In the field of AI, government and international initiatives should also be mentioned. Since 2016, the development of the AI Strategy has been ongoing, with the milestone being the adoption of the “Policy for the Development of Artificial Intelligence in Poland from 2020”. However, this document went largely unnoticed in Polish business, making it difficult to assess its role in shaping AI-related reality.

It seems that the implementation of the EU AI Act, whose pre-consultations recently concluded, will play a much more significant role.

Since 2018, the Ministry of Digitization has operated a Working Group on Artificial Intelligence, but despite the broad cross-section of specialists and several reports, it is difficult to notice a broader impact of this group on AI-related reality in Poland.

Certainly, public funding programs such as Ścieżka Smart play a significant role in the development of companies, although they seem insufficient compared to the funds allocated for AI development in other countries.

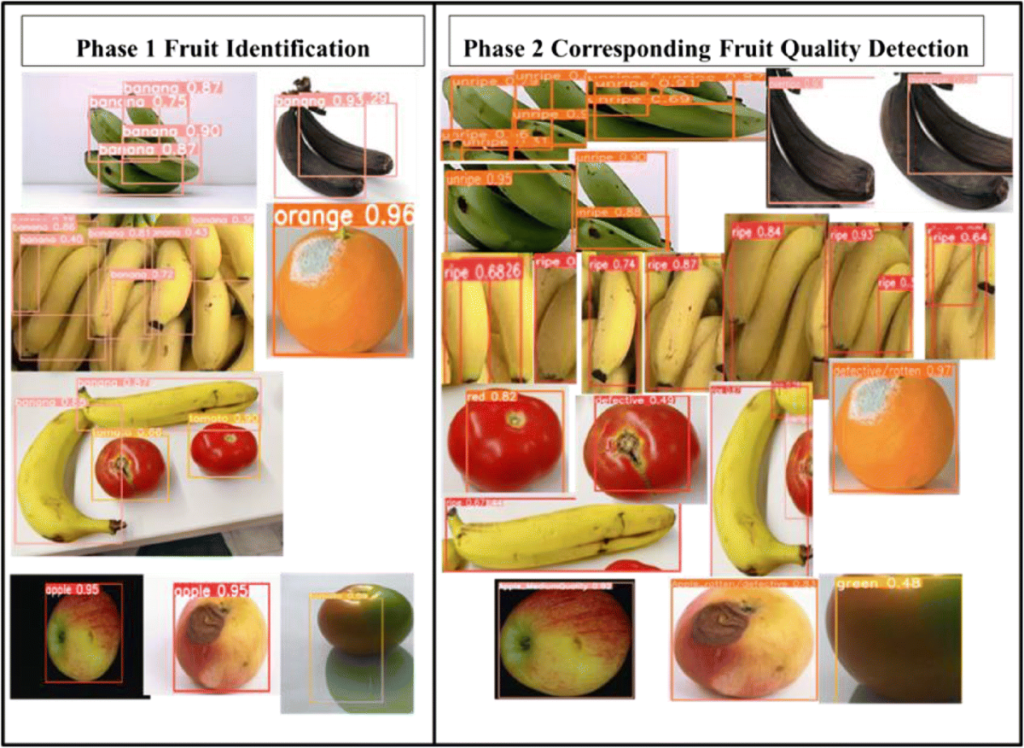

Traditional quality control methods are often time-consuming and prone to human error, leading to inefficiencies and increased costs. In this article, we present a hypothetical case study illustrating how an advanced image detection system can revolutionize quality control in the food industry, using a large fruit and vegetable processing company as an example.

The company specializes in producing jams, juices, canned vegetables, and frozen foods. It processes thousands of tons of raw materials daily on its extensive production lines. The main challenge for the company is maintaining high product quality, minimizing waste, and quickly identifying production defects, such as contamination, mechanical damage to fruits and vegetables, and non-compliance with quality standards. Traditional quality control methods proved inadequate, prompting the company to implement an AI-based automated image detection system.

The project's goal was to develop and implement an advanced AI system that would automatically inspect food products on the production line, identifying defects and damage. The system aimed to increase quality control efficiency by automating the process, reduce production waste by quickly detecting defects, and improve the overall quality of products delivered to the market, leading to higher customer satisfaction.

Three months prior to the project, the company installed cameras at key stages of the production line. Cameras were installed at the raw material intake, over sorting lines, and at packaging machines. These strategic locations allowed monitoring the quality of raw materials and products at various production stages. The cameras captured images of raw materials such as apples, tomatoes, peppers, carrots, and potatoes, which were then analyzed for defects and damage.

Over three months, the company collected about 500,000 image records, with a daily increase of about 5,000 new images. This data was stored on local servers and in the cloud, ensuring easy access to large datasets. Each record included a timestamp, location on the production line, product type, and a description of the defect if detected. During data collection, some camera methods and locations were modified to improve the quality of the collected data. Missing data was supplemented by additional photo sessions and camera configuration improvements.

Advanced AI technologies and tools such as PyTorch and OpenCV were used for system implementation. Convolutional Neural Networks (CNNs) were chosen for their effectiveness in image analysis tasks, thanks to their ability to automatically detect features and patterns. Transfer learning was also utilized: a pre-trained YOLO model was adapted to the specific data of the food industry.

The image data was preprocessed using OpenCV, including color correction, lighting normalization, and noise removal. Data augmentation, such as rotation, scaling, and changes in brightness and contrast, was applied to increase the training dataset and improve model generalization. CNN models were trained on large image datasets, covering different classes of raw materials and various types of defects. The training process was accelerated using GPUs with NVIDIA CUDA technology, allowing efficient processing of large datasets.
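The augmentation step can be illustrated with a minimal sketch. It is not the project's code: in practice these operations would be run with OpenCV or PyTorch on image arrays, while the version below uses plain Python lists so that it stays dependency-free. The function names, the probability of each transform, and the parameter ranges are all assumptions made for illustration, and scaling is left out for brevity.

```python
import random

# Assumption: a grayscale image is a list of rows, each a list of 0-255 ints.
Image = list[list[int]]


def adjust_brightness_contrast(img: Image, alpha: float, beta: float) -> Image:
    """Scale contrast by alpha, shift brightness by beta, clip to 0-255."""
    return [[max(0, min(255, round(alpha * p + beta))) for p in row] for row in img]


def flip_horizontal(img: Image) -> Image:
    """Mirror the image left to right."""
    return [row[::-1] for row in img]


def rotate_90_clockwise(img: Image) -> Image:
    """Rotate the image by 90 degrees clockwise."""
    return [list(row) for row in zip(*img[::-1])]


def augment(img: Image, rng: random.Random) -> Image:
    """Return one randomly transformed copy of img (hypothetical settings)."""
    out = img
    if rng.random() < 0.5:
        out = flip_horizontal(out)
    if rng.random() < 0.5:
        out = rotate_90_clockwise(out)
    alpha = rng.uniform(0.8, 1.2)  # contrast factor, illustrative range
    beta = rng.uniform(-20, 20)    # brightness shift, illustrative range
    return adjust_brightness_contrast(out, alpha, beta)


# Generate several variants of one (tiny, made-up) image.
rng = random.Random(0)
original = [[10, 50], [200, 250]]
variants = [augment(original, rng) for _ in range(4)]
```

Each call to `augment` yields a slightly different copy of the same image, which is how augmentation enlarges the training set without new photo sessions.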

The image detection model was deployed on AWS servers with GPU support to ensure fast image data processing. AWS EC2 servers with p3.2xlarge instances were used for scaling and system performance. A RESTful API was created using Flask, enabling communication between the image detection system and the production line. The API handled HTTP POST requests for sending images and receiving analysis results.

The API was integrated with the company's existing production system, enabling automatic image transfer from cameras to the image detection system. The production system sent images to the API in real-time, and analysis results were returned to the production system for appropriate actions, such as removing defective products from the line.

Monitoring and management tools, such as Amazon CloudWatch, were implemented to ensure continuous system availability and performance.

The system achieved 80% accuracy in detecting raw material defects, resulting in significant business benefits for the producer. The implementation reduced production waste by 20%, equivalent to 10 tons less waste per month.

The efficiency of raw material quality control increased by 50%, leading to faster and more accurate inspections. Assuming the cost of labor associated with raw material quality control is 50,000 PLN per month, the savings from increased efficiency amounted to 25,000 PLN per month.

The project involved three Data Scientists and a part-time Project Manager, lasting 4 months, covering data preparation, model training, and system deployment.

AWS servers were used for model training and image detection system deployment. Model training on EC2 p3.2xlarge instances cost 10,600 PLN for 900 hours of work. Deployment and operations on EC2 t3.large instances cost 900 PLN for 4 months of work. Data storage on Amazon S3 cost 700 PLN for 2 TB of data over 4 months, and using Amazon RDS for database storage cost 1,100 PLN.

The total project cost amounted to 513,300 PLN.
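The figures quoted above can be cross-checked with a few lines of arithmetic. The sketch below uses only numbers from this case study. The text does not itemize team costs, so the "non-cloud" remainder is an inference, and the payback period counts labor savings only, because the monetary value of the avoided waste is not given.

```python
# Cloud costs quoted in the case study (PLN)
cloud_costs = {
    "EC2 p3.2xlarge training (900 h)": 10_600,
    "EC2 t3.large deployment (4 months)": 900,
    "S3 storage (2 TB, 4 months)": 700,
    "RDS database": 1_100,
}
total_project_cost = 513_300     # PLN, as stated
monthly_qc_labor_cost = 50_000   # PLN, assumption stated in the text
efficiency_gain = 0.50           # 50% increase in quality control efficiency

cloud_total = sum(cloud_costs.values())
# Inferred, not stated: whatever is not cloud spend (presumably mostly the team)
non_cloud_cost = total_project_cost - cloud_total
monthly_labor_savings = monthly_qc_labor_cost * efficiency_gain
# Payback on labor savings alone; waste savings would shorten it
payback_months = total_project_cost / monthly_labor_savings

print(f"Cloud total:           {cloud_total:>9,} PLN")
print(f"Non-cloud remainder:   {non_cloud_cost:>9,} PLN")
print(f"Monthly labor savings: {monthly_labor_savings:>9,.0f} PLN")
print(f"Payback (labor only):  {payback_months:.1f} months")
```

Cloud spend comes to 13,300 PLN, under 3% of the total, so the project cost is dominated by the remaining 500,000 PLN. On labor savings alone, the investment would take roughly 20.5 months to pay back.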

The system was monitored for availability and performance using Amazon CloudWatch, and the image detection models were regularly updated monthly to account for changes in raw materials and new defect types. An alert system informed the team of any irregularities, such as performance drops or data availability issues.

Collaboration between teams was crucial for the project's success. Regular meetings were held between the data science, IT, production, and quality management teams to discuss project progress, exchange ideas, and identify potential issues. All project stages were thoroughly documented, including technical specifications, data analysis reports, A/B test results, and recommendations for further actions. Training was conducted for the production team on using the image detection system and interpreting the results to maximize the tool's potential.

The project faced several challenges, such as ensuring system scalability to handle increasing amounts of data and raw materials, and continuously improving detection model accuracy through regular updates and algorithm optimization. Integration with various production systems and ensuring compatibility also posed challenges.

In the future, the food producer plans to expand the detection scope, introducing the detection of other defect types, such as structural deformations or color changes. Increasing automation through more advanced decision-making mechanisms on the production line and utilizing advanced analytics for predicting quality issues and optimizing production processes are the next steps in system development.

The implementation of an advanced image detection system brought significant benefits, such as reducing production waste, increasing the efficiency of raw material quality control, and improving product quality. Although the project faced some challenges, the business benefits of implementing the system were substantial, and the company plans further development and optimization of the system in the future.

This hypothetical case study shows how artificial intelligence can revolutionize the food industry, bringing tangible benefits to both producers and consumers.

Online stores must continuously tailor their offerings to the needs and preferences of customers to increase conversion rates and cart value.

Below, we will discuss step by step a hypothetical project to implement an advanced recommendation system in an electronics store, which could significantly improve sales metrics and the customer shopping experience.

An online store specializing in electronics (laptops, smartphones, TVs, and accessories) observed that many customers were leaving the site without making a purchase. Despite a wide range of products, users struggled to find items that suited their needs. As a result, the conversion rates and average cart value were lower than expected, negatively impacting the store's revenue and profitability.

The project aimed to develop and implement an advanced recommendation system to:

The project utilized various data sources crucial for the successful implementation of the recommendation system:

To ensure data quality, actions were taken to:

Data Preparation and Processing

Before building the recommendation system, several actions were taken to gain valuable business insights:

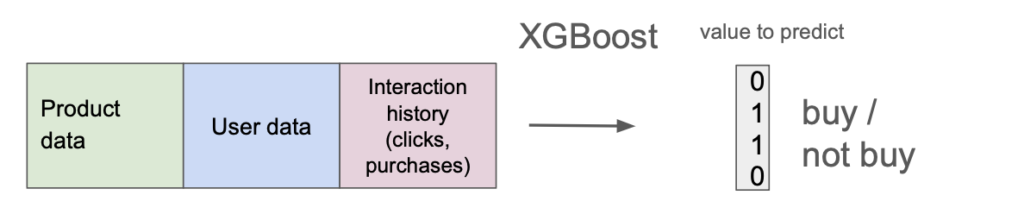

The implementation of the recommendation system utilized various models and artificial intelligence technologies:

After the system was implemented, A/B tests were conducted. The recommendation system returned its results for 50% of randomly selected users, while the rest received the usual product order (by popularity). This allowed for precise measurement of the model's quality.
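A test of this kind could be sketched as follows. This is an illustration under assumptions rather than the project's implementation: the hash-based 50/50 split, the salt string, the two-proportion z-test, and the conversion counts are all hypothetical.

```python
import hashlib
import math


def assign_group(user_id: str, salt: str = "reco-ab-test") -> str:
    """Deterministically place a user in one of two equally likely groups."""
    digest = hashlib.sha256(f"{salt}:{user_id}".encode()).hexdigest()
    return "treatment" if int(digest, 16) % 2 == 0 else "control"


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical counts: control sees products by popularity,
# treatment sees the recommendation system's ordering.
z, p_value = two_proportion_z_test(conv_a=200, n_a=10_000, conv_b=260, n_b=10_000)
print(f"group for user-123: {assign_group('user-123')}")
print(f"z = {z:.2f}, p = {p_value:.4f}")
```

Hashing the user ID instead of drawing a random number on every visit keeps each user in the same group for the whole test, so their experience stays consistent and the measurement stays clean.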

The main costs of the project were the wages of the development team.

Project Duration: 2 months.

As the entire system was implemented in the cloud, additional costs included:

Total Project Cost: 303,870 PLN.

Thus, the investment paid off two weeks after the solution was implemented.

After implementing the machine learning model, it was crucial to monitor its performance and make necessary adjustments.

Monitoring was implemented using Amazon CloudWatch. Due to the introduction of new products and changing user preferences, automatic weekly model retraining was implemented. Additionally, an alert system was introduced to report anomalies.

For each recommended product, users could click a thumbs up or down to rate the recommendation's quality. This provided an additional source of information useful for retraining the AI model.

The hypothetical implementation of an advanced recommendation system in an electronics store was a key step in improving customer shopping experiences and increasing sales efficiency.

By personalizing the product offering based on user preferences, noticeable improvements were achieved in conversion rates and average cart value.

The project required integrating data from various sources and applying advanced machine learning technologies, such as XGBoost, which enabled effective processing and analysis of large volumes of data and generating accurate product recommendations in real-time.

In recent years, we have witnessed several breakthrough moments, such as the creation of the AlphaZero model in 2017, which achieved superhuman level in chess and Go, and the development of the Transformer architecture in the same year, which underpins numerous language processing models. However, it was the release of the GPT-3.5 model as a chatbot, ChatGPT, on November 30, 2022, that brought AI into the public eye. Since then, AI has come into widespread use in private life, and businesses have been searching for applications that save time, streamline processes, and increase profits.

It is important to note that the use of AI-based algorithms (or more precisely, machine learning) for business applications has been ongoing for at least several years. Common examples include systems recommending ads or other content to internet users, predictive systems in financial institutions, and image detection algorithms in industry. Although these algorithms continue to evolve, companies are far from fully utilizing AI's potential.

Therefore, in this article, I will not only present how to use generative artificial intelligence (such as ChatGPT or Midjourney) in your company but also describe more broadly how to approach identifying areas that can be improved by various types of AI algorithms.

It is worth starting your AI journey by reviewing the available tools. There are already quite a few, each serving very different applications. Here are some examples.

Adobe Firefly is an advanced tool for generating and editing graphics, ideal for marketing and design departments. Synthesia, on the other hand, allows you to create videos with generated voices and characters, which is a great solution for producing training materials and presentations.

ChatGPT and Gemini are versatile tools that can help you create marketing content, handle customer service, respond to emails, or generate ideas. Grammarly, in turn, helps improve grammar and writing style, which is invaluable when creating high-quality marketing content and reports.

Turbologo and Logopony are logo-generation tools that can speed up the process of creating your company's visual identity. Canva offers a wide range of design tools, including those using generative AI models. You can create high-quality graphics with Midjourney or Leonardo.ai. If you need background music, check out Suno.

Tools such as Slides AI, Presentations AI, Pitch, and Beautiful.AI enable the creation of attractive presentations with automatic formatting, which can significantly save time and improve the visual quality of presented materials.

Do not forget to use meeting transcription tools like Otter.ai, and text summarization systems like SummarizeBot.

For research teams, Scite.AI offers advanced capabilities for searching scientific information and significantly speeds up the research process.

It is worth experimenting with various tools to find those that best meet the needs of your business. They will certainly increase the efficiency of your employees. However, using these tools is just the beginning of AI possibilities in your company!

You have probably heard that to obtain high-quality results from generative models, it is crucial to write appropriate prompts. Relying only on short and intuitive prompts, you will quickly find that the responses generated by the tools are quite standard and repetitive. They are likely not to meet your company's needs.

Therefore, the next step will be to try and experiment with different prompts. This will help you better understand the capabilities and limitations of AI systems. You will also quickly learn the basic characteristics of AI algorithms:

After initial trials, which are more for fun than serious applications, you can start creating more advanced (yet still quick to build) AI solutions for your business.

This could involve creating a good prompt (i.e., an instruction for the model) and saving it for repeated use. For example, this could be an instruction for social media posts, where you define your brand's style, requirements for post length, and possible use of emojis, or hashtags.
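For teams that prefer to keep such an instruction in code rather than in a chat window, a saved prompt might look like the sketch below. The brand name, tone, and limits are made-up placeholders, and the resulting text would still need to be pasted into (or sent to) whichever generative tool you use.

```python
from string import Template

# Reusable instruction; every value filled in below is a hypothetical example.
SOCIAL_POST_PROMPT = Template(
    "You are writing a social media post for $brand.\n"
    "Tone of voice: $tone.\n"
    "Maximum length: $max_chars characters.\n"
    "Emojis: $emoji_policy. Hashtags: $hashtag_policy.\n"
    "Topic: $topic"
)

BRAND_DEFAULTS = {
    "brand": "Example Bakery",
    "tone": "warm, informal, no jargon",
    "max_chars": 400,
    "emoji_policy": "at most two",
    "hashtag_policy": "three at the end of the post",
}


def build_prompt(topic: str, **overrides) -> str:
    """Fill the saved template, optionally overriding brand defaults."""
    settings = {**BRAND_DEFAULTS, **overrides}
    return SOCIAL_POST_PROMPT.substitute(topic=topic, **settings)


print(build_prompt("New sourdough loaf available from Friday", max_chars=280))
```

Keeping the brand rules in one place means every post starts from the same instruction, and a change of style only has to be made once.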

In many tools (e.g., in ChatGPT), you can also upload files in various formats, whether spreadsheets (XLSX), documents (PDF, DOCX), or images (JPG). In this way, you will build agents for managing knowledge in your company, for instance, regarding employee information or quickly searching for information to respond to a client.

Generative models represent just a small part of the wide array of AI algorithms available. Likely, they do not fully leverage the potential inherent in the data collected by your company. Therefore, it is worthwhile to explore other classes of models to consider their use in your business.

Remember, using these models typically requires building a custom AI system by a Data Science team. Alternatively, you can opt for low-code or no-code tools where individuals without advanced programming skills can also create customized AI systems.

The most important types of non-generative ("traditional") AI include:

These algorithms typically take images or videos as input. Their outputs can range from classification and object detection (identifying objects using colored rectangles, for instance) to semantic segmentation—detecting entire areas of an image and labeling pixels representing specific objects.

Example of object detection and segmentation: (https://manipulation.csail.mit.edu/segmentation.html)

Image processing algorithms find wide applications across various industries. In manufacturing, they are used for quality control, monitoring production processes, and resource management. In medical diagnostics, they enable the analysis of X-ray images, MRIs, and other scans, aiding in the rapid detection of diseases and anomalies. Retail utilizes these technologies for customer behavior analysis, inventory management, and personalized offers.

Crucial in e-commerce, streaming services, and digital marketing, recommendation systems analyze user preferences and behaviors to provide personalized recommendations for products, content, or services, thereby enhancing customer engagement and loyalty.

Predictive algorithms analyze historical data to forecast future events, invaluable in sectors such as finance, logistics, and resource management. Clustering algorithms help identify patterns and segment data, beneficial in market analysis and customer management.

Optimization systems use AI algorithms to manage processes, resources, and logistics in the most efficient manner possible. They can significantly reduce operational costs and increase business efficiency. Examples include warehouse management, route planning for deliveries, production scheduling, machine and resource utilization optimization, and waste reduction.

Exploring these diverse AI capabilities can provide insights into how they can be effectively integrated into your business processes, enhancing productivity and decision-making across various domains.

Harnessing the potential of AI in your company can greatly benefit from conducting exploratory workshops and cultivating an AI culture. These initiatives help teams better understand the capabilities offered by AI and how these technologies can be adapted to specific business needs.

Exploratory workshops are interactive sessions designed to identify areas within the company where artificial intelligence can bring the greatest benefits. During these workshops, employees from different departments collaborate to understand which problems can be solved using AI and what new opportunities AI can open up.

The introduction to workshops typically begins with a review of basic AI concepts, allowing participants to better grasp the concepts and terminology. Subsequently, specific use cases of AI in industries similar to your company's are analyzed. In the next stage, participants identify their own business challenges and needs that can be addressed using AI, and then collectively develop initial ideas for solutions.

Building an AI culture within the company also requires creating an environment that supports innovation and continuous learning. It is crucial to promote openness to new technologies and encourage employees to experiment with AI tools. Additionally, company leaders should actively support AI-related initiatives and lead by example through involvement in AI implementation projects.

Customized AI solutions typically require much larger financial investments compared to simple AI tools, which are often free or cost a few hundred złotys per month. The cost of developing such solutions can range from tens to hundreds of thousands of złotys.

However, if you have a well-defined problem and a Proof-of-Concept that demonstrates the AI system meets your expectations, the benefits can appear quickly in the form of increased sales by several to several dozen percent, savings on losses, or savings of thousands of work hours for your employees.

Artificial intelligence in business encompasses a wide range of approaches. You can start with initial attempts on your own using ready-made tools that are free or very low cost. However, if you're looking for something more advanced and tailored to your company's specific needs, you should be prepared for higher costs and seek the assistance of technical experts. If you have any questions or would like to discuss your ideas further, feel free to reach out!

As models have become more complex, it can be difficult to know what causes them to make certain predictions. That is why we are seeing rapid growth in interpretability tools such as SHAP or DALEX.

In this article, I will discuss some reasons why interpretability is so important. There are more reasons than you might expect, and some of them are far from obvious.

One of the most important aspects of interpretability is that it allows you to gain confidence in your model and be sure that it does what it was supposed to do.

The first step in assessing model quality is a properly defined metric. Depending on the application, it can be, for example, accuracy, F1 score, or MAPE. However, even if you have chosen a proper metric, it can be computed in the wrong way or can be non-informative. Hence, we can usually achieve the highest confidence in model quality only by understanding what the model is doing and why it gives such predictions.
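A small, self-contained illustration of a non-informative metric follows. The data is synthetic and the "model" is a deliberately useless one that always predicts the majority class; both are assumptions made for the example.

```python
def accuracy(y_true, y_pred):
    """Share of predictions that match the true labels."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def f1_score(y_true, y_pred, positive=1):
    """Harmonic mean of precision and recall for the positive class."""
    tp = sum(t == positive and p == positive for t, p in zip(y_true, y_pred))
    fp = sum(t != positive and p == positive for t, p in zip(y_true, y_pred))
    fn = sum(t == positive and p != positive for t, p in zip(y_true, y_pred))
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


# Synthetic, imbalanced data: 5 positives (e.g. churners) among 100 customers.
y_true = [1] * 5 + [0] * 95
# A "model" that never predicts the positive class.
y_pred = [0] * 100

print(f"accuracy = {accuracy(y_true, y_pred):.2f}")
print(f"F1       = {f1_score(y_true, y_pred):.2f}")
```

The model scores 95% accuracy while finding none of the cases that matter, which the F1 score of zero exposes. Looking at what the model actually does catches this kind of problem even when the headline number looks fine.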

In Machine Learning projects, trust is the foundation. Without trust, you cannot build relationships or collaborations. Trust allows you to work together and share data, knowledge, and expertise. It also allows you to continue working with each other in the future.

In order for your model to be interpretable and trustworthy, it needs to have clear explanations of what it does and why it does it. The more transparent you can make your model’s output, the more likely it will be that others will trust your results — and want to work with you again!

Understanding the model and how it works is extremely important when the model’s output is below your expectations and you try to find out what is going on. If you do not understand the model, it is very hard to debug when something goes wrong with your prediction results.

For example, suppose an algorithm gives inaccurate predictions for some data points in a test set but does well on others. Perhaps the well-predicted points come from the same distribution as the training set. Once you understand which features your model relies on most, you may find that the badly predicted points are outliers with respect to these features, or that some feature values are missing for them.

Thanks to interpretability, you can know which features are important for your model, and propose simpler alternative models which have similar predictive power.

For example, suppose that you have a classification problem with thousands of features and you discover that only 10% of them are significant in predicting customer churn. Now you know which features matter and which do not, so it may be possible to remove the unimportant features and train your model again. It will be faster, because it has fewer parameters, and the process of data gathering and preprocessing will be simpler as well.
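One simple way to find out which features matter is permutation importance: shuffle one feature at a time and measure how much the model's accuracy drops. The sketch below is a stand-in for what tools such as SHAP or DALEX do in a more refined way. The data is synthetic, `toy_model` is a hypothetical placeholder for a trained model, and the 0.01 threshold is an arbitrary choice.

```python
import random


def accuracy(model, X, y):
    """Share of rows for which the model predicts the true label."""
    return sum(model(row) == label for row, label in zip(X, y)) / len(y)


def permutation_importance(model, X, y, n_repeats=10, seed=0):
    """Mean drop in accuracy after shuffling each feature column."""
    rng = random.Random(seed)
    baseline = accuracy(model, X, y)
    importances = []
    for j in range(len(X[0])):
        drops = []
        for _ in range(n_repeats):
            column = [row[j] for row in X]
            rng.shuffle(column)
            X_perm = [row[:j] + [v] + row[j + 1:] for row, v in zip(X, column)]
            drops.append(baseline - accuracy(model, X_perm, y))
        importances.append(sum(drops) / n_repeats)
    return importances


# Synthetic data: three features, but the label depends only on feature 0.
data_rng = random.Random(42)
X = [[data_rng.random() for _ in range(3)] for _ in range(500)]
y = [int(row[0] > 0.5) for row in X]


def toy_model(row):
    """Placeholder for a trained classifier (hypothetical)."""
    return int(row[0] > 0.5)


importances = permutation_importance(toy_model, X, y)
keep = [j for j, imp in enumerate(importances) if imp > 0.01]
print("importances:", [round(imp, 3) for imp in importances])
print("features worth keeping:", keep)
```

Features whose shuffling does not hurt accuracy are candidates for removal, after which the simpler model can be retrained and compared with the original.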

The tools for model explainability show us the features that affect the model’s output the most. Some relations among features and correlations with the output can be very intuitive and known to experts, e.g. if you are trying to predict whether or not a customer will default on a loan, the history of their repayment can be an important factor. However, models very often detect correlations previously unexplored by humans. Thanks to this, human decisions can improve in the future, even if you decide not to replace them with the model.

If a model is being used to determine whether or not you can get approved for something (a loan, medical insurance, etc.), then it is important to be sure that the model makes sense and provides an accurate answer. If the decision is based on an opaque black box, you may have no idea why one person was approved and another was not. This lack of transparency also means that it will be difficult to prove that the model is working properly in court if there are issues with its predictions going forward.

Having an interpretable model is important for being sure that the model works well and for building trust between you and your end users. It is also important for debugging the model, which can help you quickly isolate issues and fix them before deploying the model in production.

Model explanation is useful even if you do not end up using the current version of your model: it enables building a simpler alternative, and it extends the knowledge of human experts and improves their decisions.

Finally, if you are going through regulatory compliance checks, having an interpretable version of your model will make these procedures much easier.

I hope you have found this article useful in your data science journey. Please join us on our blog for more articles!

The project aims to develop an advanced system that enables the detection of faults, disturbances, and other issues in the electrical infrastructure, thereby improving its safety and efficiency.

1. Data Collection from Cameras:

Recorded hundreds of hours of video footage from drones, focusing on electrical transmission lines.

2. Data Labeling:

Annotators labeled the data, marking various areas for monitoring, including:

3. Utilization of Pretrained AI Algorithms:

Pretrained models based on deep learning and Convolutional Neural Networks (CNN) were employed.

4. Algorithm Fine-Tuning:

Algorithms were fine-tuned for predicting disturbances in the electrical infrastructure through training on labeled data.

5. Offline Experiments on Historical Data:

Achieved an accuracy of 92% on historical data, serving as a starting point for further improvements.

6. Data Collection and Algorithm Validation:

Additional data was gathered and used to evaluate the algorithm, achieving an accuracy of 89.5%.

7. Iterations to Improve the Algorithm:

Ensemble techniques were applied, combining results from different models, resulting in a 94% accuracy.

8. Work on AI Model Size Reduction:

To enable deployment on target devices, the model was optimized while preserving its effectiveness.

9. Pilot - Algorithm Deployment in Two Test Locations:

The algorithm was tested in real-world conditions at two locations, allowing assessment and adaptation to different terrain conditions.

10. Generating Drone Flight Reports:

The algorithm was integrated with drones, enabling the generation of reports with monitoring results after each drone flight.
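The ensemble step (step 7) can be illustrated with a minimal majority-vote sketch. The project description does not say which ensemble technique was used, so voting is an assumption here, and the labels and per-model predictions are made up.

```python
from collections import Counter


def majority_vote(predictions_per_model):
    """Combine per-model label lists by picking the most common label per sample."""
    combined = []
    for votes in zip(*predictions_per_model):
        combined.append(Counter(votes).most_common(1)[0][0])
    return combined


def accuracy(y_true, y_pred):
    """Share of predictions that match the true labels."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


# Hypothetical labels for five video frames and three models' predictions.
y_true = ["ok", "fault", "ok", "fault", "ok"]
model_a = ["ok", "fault", "ok", "ok", "ok"]
model_b = ["ok", "ok", "ok", "fault", "ok"]
model_c = ["fault", "fault", "ok", "fault", "ok"]

ensemble = majority_vote([model_a, model_b, model_c])
for name, preds in [("A", model_a), ("B", model_b), ("C", model_c), ("ensemble", ensemble)]:
    print(f"{name}: accuracy = {accuracy(y_true, preds):.0%}")
```

Each model makes a different mistake, so the vote corrects all three. That is the intuition behind combining models: the gain comes from errors that do not overlap.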

The total project cost was 800,000 PLN, distributed as follows: